Embedding Classifier

Learn how to train an embedding classifier using the Clarifai Python SDK.

An Embedding Classifier is a machine learning model that combines word embeddings with classification: inputs are first converted to embedding vectors, and a classifier is then trained on those vectors. Word embeddings are dense vector representations of words in a high-dimensional space, typically generated by algorithms like Word2Vec, GloVe, or FastText. You can learn more about the Embedding Classifier here.
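To make the idea concrete, here is a minimal sketch of classification on top of embeddings. It is not how Clarifai implements the model: the three-dimensional vectors are made up, and a nearest-centroid rule with cosine similarity stands in for whatever classifier the platform actually trains.

```python
import math

# Toy 3-dimensional "embeddings" with labels. Real embeddings come from a
# trained embedding model and have far more dimensions; these are made up.
TRAIN = [
    ([0.9, 0.1, 0.0], "pos"),
    ([0.8, 0.2, 0.1], "pos"),
    ([0.1, 0.9, 0.2], "neg"),
    ([0.0, 0.8, 0.3], "neg"),
]


def centroid(vectors):
    """Element-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]


def cosine(a, b):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def fit(samples):
    """Compute one centroid per label from (vector, label) pairs."""
    by_label = {}
    for vector, label in samples:
        by_label.setdefault(label, []).append(vector)
    return {label: centroid(vectors) for label, vectors in by_label.items()}


def predict(centroids, vector):
    """Return the label whose centroid is most similar to the vector."""
    return max(centroids, key=lambda label: cosine(centroids[label], vector))


centroids = fit(TRAIN)
print(predict(centroids, [0.7, 0.3, 0.1]))
```

The point of the sketch is the split of responsibilities: the embedding step turns text into vectors, and only the small classifier on top has to be trained on your labels.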

App Creation

The first step in model training is to create an app, under which the training takes place. Here we create an app with the app ID “demo_train” and the base workflow set to “Universal”. You can change the base workflow to Empty, Universal, Language Understanding, or General, depending on your use case.

- Python

from clarifai.client.user import User

# Replace "user_id" with your own user ID

client = User(user_id="user_id")

app = client.create_app(app_id="demo_train", base_workflow="Universal")

Dataset Upload

The next step is to upload the dataset to your app so that the model can read the training data directly from the platform. The data used for training in this tutorial is available in the examples repository you have cloned.
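Before uploading, it can help to check the CSV locally. The sketch below counts rows per label and fails early on missing columns or empty text. The column names `inputs` and `concepts` are assumptions used for illustration, as is the helper name `label_counts`; adjust them to match the header of your own file.

```python
import csv
import io
from collections import Counter


def label_counts(csv_file, text_column="inputs", label_column="concepts"):
    """Count rows per label, raising on missing columns or empty text.

    The default column names are assumptions; pass your own if they differ.
    """
    reader = csv.DictReader(csv_file)
    missing = {text_column, label_column} - set(reader.fieldnames or [])
    if missing:
        raise ValueError(f"CSV is missing columns: {sorted(missing)}")
    counts = Counter()
    for row_number, row in enumerate(reader, start=2):  # row 1 is the header
        if not (row[text_column] or "").strip():
            raise ValueError(f"Empty text on CSV row {row_number}")
        counts[row[label_column]] += 1
    return counts


# A small in-memory sample standing in for the real CSV file
sample = io.StringIO(
    "inputs,concepts\n"
    "Loved every minute of it,id-pos\n"
    "A waste of two hours,id-neg\n"
    "Dull and predictable,id-neg\n"
)
print(label_counts(sample))
```

For a file on disk, open it with `open(path, newline="", encoding="utf-8")` and pass the file object to the same function. A heavily skewed count is worth knowing about before you read the evaluation metrics later on.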

- Python

# os is used to build the path to the CSV file

import os

# Construct the path to the CSV file, relative to the root of the cloned examples repository

CSV_PATH = os.path.join(os.getcwd().split('/models/model_train')[0],'datasets/upload/data/imdb.csv')

# Create a Clarifai dataset with the specified dataset_id

dataset = app.create_dataset(dataset_id="text_dataset")

# Upload the labeled text rows from the CSV file to the dataset

dataset.upload_from_csv(csv_path=CSV_PATH,input_type='text',csv_type='raw', labels=True)

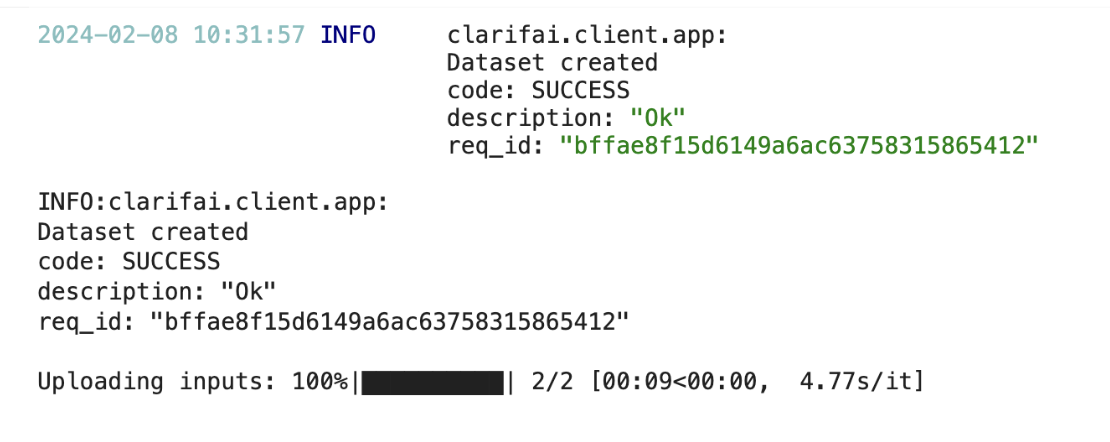

If you have followed the steps correctly you should receive an output that looks like this,

Output
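The `CSV_PATH` expression in the upload code only resolves correctly when the script runs from inside the `models/model_train` folder of the cloned repository. The sketch below reproduces the same string logic in a helper so the behavior is easy to see. The helper name `csv_path_from` and both working directories are hypothetical, chosen only for illustration.

```python
import os

MARKER = "/models/model_train"  # folder the tutorial code is assumed to run from


def csv_path_from(cwd, relative="datasets/upload/data/imdb.csv"):
    """Mirror the tutorial's CSV_PATH expression for a given working directory."""
    # Everything before the marker is treated as the repository root.
    # If the marker is absent, split() returns the whole string unchanged.
    repo_root = cwd.split(MARKER)[0]
    return os.path.join(repo_root, relative)


# Hypothetical working directories, for illustration only
inside_repo = csv_path_from("/home/me/examples/models/model_train")
elsewhere = csv_path_from("/tmp/scratch")

print(inside_repo)
print(elsewhere)

# A cheap guard before uploading: check that the file is really there
print(os.path.exists(inside_repo))
```

When the working directory does not contain the marker, the path is simply built under the current directory, so checking `os.path.exists(CSV_PATH)` before calling the upload is a cheap safeguard.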

Choose The Model Type

First, let's list all the trainable model types available on the platform:

- Python

print(app.list_trainable_model_types())

Output

['visual-classifier',

'visual-detector',

'visual-segmenter',

'visual-embedder',

'clusterer',

'text-classifier',

'embedding-classifier',

'text-to-text']

Click here to learn more about Clarifai Model Types.

Model Creation

From the list above, we choose embedding-classifier because it fits our use case. Now let's create a model of that type.

- Python

MODEL_ID = "model_text_embedder"

MODEL_TYPE_ID = "embedding-classifier"

# Create a model by passing the model ID and model type ID as parameters

model = app.create_model(model_id=MODEL_ID, model_type_id=MODEL_TYPE_ID)

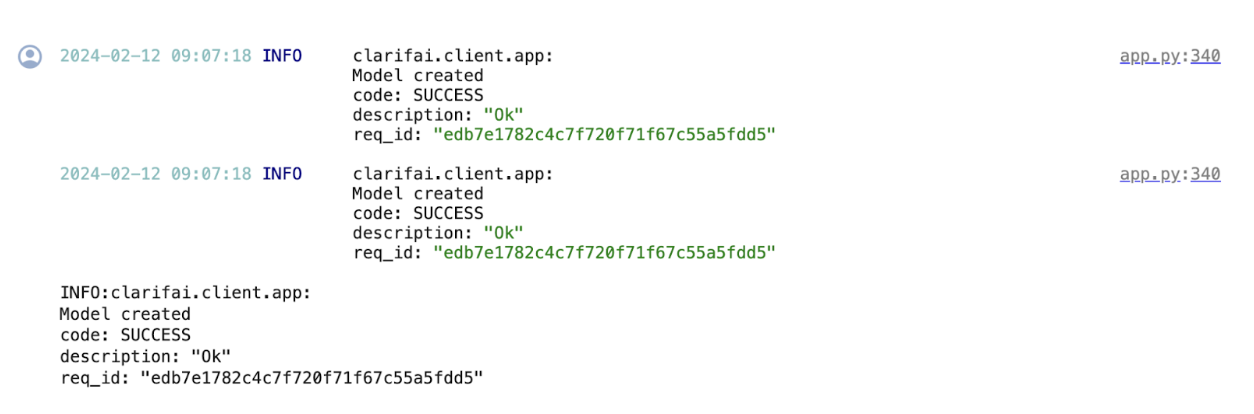

Output

Template Selection

The Clarifai platform provides a template feature. Templates let you choose the specific architecture used by your neural network and define a set of hyperparameters that control how your model learns. For the Embedding Classifier, however, only one default template is available.

Setup Model Parameters

You can update the model parameters to suit your needs before initiating training.

- Python

# Get the model params for the default template

model_params = model.get_params()

# List the concept IDs available in the app

concepts = [concept.id for concept in app.list_concepts()]

# Update the dataset_id and concepts fields in the model params

model.update_params(dataset_id='text_dataset', concepts=["id-pos", "id-neg"])

# Print the updated params

print(model.training_params)

Output

{'dataset_id': 'text_dataset',

'dataset_version_id': '',

'concepts': ['id-pos', 'id-neg'],

'train_params': {'base_embed_model': None, 'enrich_dataset': 'Automatic'},

'inference_params': {'min_value': 0.0}}
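The code above builds a `concepts` list from the app but then passes hard-coded concept IDs to `update_params`. One possible use for that list is to confirm that the IDs you are about to train on actually exist in the app. The helper below is a hypothetical sketch, not part of the SDK, and uses a stand-in list so that it runs on its own.

```python
def check_concepts(requested, available):
    """Return the requested concept IDs, raising if any are not in the app."""
    available_set = set(available)
    unknown = [concept_id for concept_id in requested if concept_id not in available_set]
    if unknown:
        raise ValueError(f"Concepts not found in the app: {unknown}")
    return list(requested)


# Stand-in for the list built from app.list_concepts() in the code above
available = ["id-pos", "id-neg"]

print(check_concepts(["id-pos", "id-neg"], available))
```

In the tutorial flow you would pass the real `concepts` list as `available` and hand the returned list to `model.update_params`.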

Initiate Model Training

We can start the model training by calling the model.train() method. The Clarifai Python SDK can also report the training status and save the training logs to a local file.

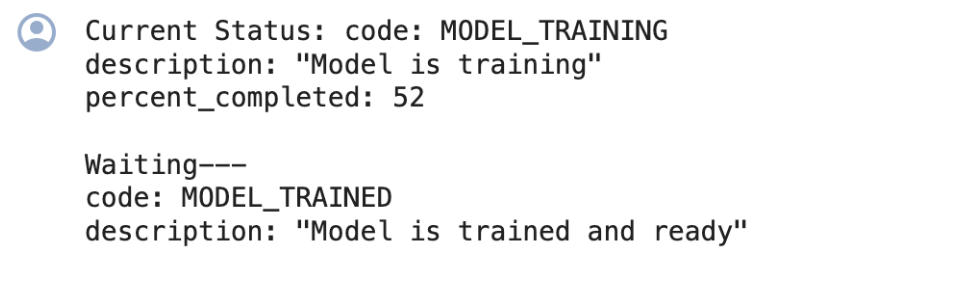

If the status code is 'MODEL-TRAINED', then the user can know the Model is Trained and ready to use.

- Python

import time

#Starting the training

model_version_id = model.train()

#Checking the status of training

while True:

status = model.training_status(version_id=model_version_id,training_logs=False)

if status.code == 21106: #MODEL_TRAINING_FAILED

print(status)

break

elif status.code == 21100: #MODEL_TRAINED

print(status)

break

else:

print("Current Status:",status)

print("Waiting---")

time.sleep(120)

Output
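The polling loop above runs until training either succeeds or fails, so a job that stalls would keep it waiting indefinitely. The sketch below wraps the same logic in a helper with a timeout. The helper name `wait_for_training`, its parameters, and the `IN_PROGRESS` value are hypothetical; only the two status codes are taken from the tutorial code. A fake status source is used so the example runs without the platform.

```python
import time

MODEL_TRAINED = 21100          # success code used in the tutorial loop
MODEL_TRAINING_FAILED = 21106  # failure code used in the tutorial loop
IN_PROGRESS = -1               # stand-in value, not a real Clarifai status code


def wait_for_training(get_status_code, poll_seconds=120, timeout_seconds=3600,
                      sleep=time.sleep):
    """Poll until training succeeds (True) or fails (False).

    Raises TimeoutError if neither happens within timeout_seconds.
    """
    waited = 0
    while True:
        code = get_status_code()
        if code == MODEL_TRAINED:
            return True
        if code == MODEL_TRAINING_FAILED:
            return False
        if waited >= timeout_seconds:
            raise TimeoutError(f"Training still running after {waited} seconds")
        sleep(poll_seconds)
        waited += poll_seconds


# Fake status source: two in-progress polls, then success.
fake_codes = iter([IN_PROGRESS, IN_PROGRESS, MODEL_TRAINED])
print(wait_for_training(lambda: next(fake_codes), sleep=lambda seconds: None))
```

With the SDK objects from this tutorial, the callable would be something like `lambda: model.training_status(version_id=model_version_id).code`. Passing `sleep` as a parameter keeps the helper easy to test without real delays.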

Model Prediction

Since the model is trained and ready, let’s run some predictions to see how it performs:

- Python

# Get the predictions

TEXT = b"This is a great place to work"

model_prediction = model.predict_by_bytes(TEXT, input_type="text")

# Get the output

print('Input: ',TEXT)

for concept in model_prediction.outputs[0].data.concepts:
    print(concept.id, ':', round(concept.value, 2))

Output

Input: b'This is a great place to work'

id-neg : 0.72

id-pos : 0.28
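If you only need a single label rather than the full list of scores, you can pick the highest-scoring concept. The sketch below uses a `namedtuple` as a stand-in for the concept objects returned by the SDK, populated with the scores printed above. The helper name `top_concept` and its `min_value` cutoff are hypothetical and are applied client-side here.

```python
from collections import namedtuple

# Stand-in for the concept objects in model_prediction.outputs[0].data.concepts
Concept = namedtuple("Concept", ["id", "value"])


def top_concept(concepts, min_value=0.0):
    """Return the highest-scoring concept at or above min_value, or None."""
    kept = [concept for concept in concepts if concept.value >= min_value]
    return max(kept, key=lambda concept: concept.value) if kept else None


# Scores copied from the output above
concepts = [Concept("id-neg", 0.72), Concept("id-pos", 0.28)]

best = top_concept(concepts)
print(best.id, round(best.value, 2))

# With a stricter cutoff, no concept qualifies and the helper returns None
print(top_concept(concepts, min_value=0.9))
```

Returning `None` when nothing clears the cutoff lets the caller treat low-confidence predictions as "undecided" instead of forcing a label.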

Model Evaluation

Now let's evaluate the model on the training and test datasets. First, let's look at the evaluation metrics for the training dataset:

- Python

# Evaluate the model using the specified dataset ID 'text_dataset' and evaluation ID 'one'.

model.evaluate(dataset_id='text_dataset',eval_id='one',eval_info={"use_kfold":False})

# Retrieve the evaluation result for the evaluation ID 'one'.

result = model.get_eval_by_id(eval_id="one")

# Print the summary of the evaluation result.

print(result.summary)

Output

macro_avg_roc_auc: 1.0

macro_avg_f1_score: 1.0

macro_avg_precision: 1.0

macro_avg_recall: 1.0

Before evaluating on a test dataset, we first have to upload it from its CSV file. Then we can run the model evaluation:

- Python

# os is used to build the path to the CSV file

import os

# Construct the path to the test CSV file

CSV_PATH = os.path.join(os.getcwd().split('/models/model_train')[0],'datasets/upload/data/test_imdb.csv')

# Create a Clarifai dataset with the specified dataset_id

test_dataset = app.create_dataset(dataset_id="test_text_dataset")

# Upload the labeled text rows from the test CSV file to the dataset

test_dataset.upload_from_csv(csv_path=CSV_PATH,input_type='text',csv_type='raw', labels=True)

# Evaluate the model using the specified test text dataset identified as 'test_text_dataset'

# and the evaluation identifier 'two'.

model.evaluate(dataset_id='test_text_dataset', eval_id='two')

# Retrieve the evaluation result with the identifier 'two'.

result = model.get_eval_by_id(eval_id="two")

# Print the summary of the evaluation result.

print(result.summary)

Output

macro_avg_roc_auc: 1.0

macro_avg_f1_score: 1.0

macro_avg_precision: 1.0

macro_avg_recall: 1.0

Finally, let's compare the results across both datasets using the EvalResultCompare feature of the Clarifai Python SDK to get a better understanding of the model's performance.

- Python

from clarifai.utils.evaluation import EvalResultCompare

# Creating an instance of EvalResultCompare class with specified models and datasets

eval_result = EvalResultCompare(models=[model], datasets=[dataset, test_dataset])

# Printing a detailed summary of the evaluation result

print(eval_result.detailed_summary())

Output

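Per-concept results:

| Concept | Accuracy (ROC AUC) | Total Labeled | Total Predicted | True Positives | False Negatives | False Positives | Recall | Precision | F1 | Dataset |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| id-pos | 1.0 | 80 | 80 | 80 | 0 | 0 | 1.0 | 1.0 | 1.0 | text_dataset2 |
| id-neg | 1.0 | 120 | 120 | 120 | 0 | 0 | 1.0 | 1.0 | 1.0 | text_dataset2 |
| id-pos | 1.0 | 31 | 31 | 31 | 0 | 0 | 1.0 | 1.0 | 1.0 | test_text_dataset |
| id-neg | 1.0 | 40 | 40 | 40 | 0 | 0 | 1.0 | 1.0 | 1.0 | test_text_dataset |

Per-dataset totals:

| Total Concept | Accuracy (ROC AUC) | Total Labeled | Total Predicted | True Positives | False Negatives | False Positives | Recall | Precision | F1 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Dataset:text_dataset2 | 1.0 | 200 | 200 | 200 | 0 | 0 | 1.0 | 1.0 | 1.0 |
| Dataset:test_text_dataset | 1.0 | 71 | 71 | 71 | 0 | 0 | 1.0 | 1.0 | 1.0 |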
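The Recall, Precision, and F1 columns follow from the true positive, false positive, and false negative counts using the standard definitions. The sketch below recomputes them for the two test_text_dataset rows and then takes the unweighted mean across concepts, which is what a macro average means. It is a worked check of the arithmetic, not the SDK's own evaluation code.

```python
def precision_recall_f1(tp, fp, fn):
    """Standard precision, recall, and F1 from raw counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return precision, recall, f1


# (true positives, false positives, false negatives) per concept,
# copied from the test_text_dataset rows of the output above
counts = {"id-pos": (31, 0, 0), "id-neg": (40, 0, 0)}

per_concept = {name: precision_recall_f1(*c) for name, c in counts.items()}
for name, (precision, recall, f1) in per_concept.items():
    print(name, precision, recall, f1)

# Macro average: unweighted mean of the per-concept F1 scores
macro_f1 = sum(f1 for _, _, f1 in per_concept.values()) / len(per_concept)
print("macro_avg_f1_score:", macro_f1)
```

With no false positives or false negatives, every ratio reduces to 1.0, which matches the summaries printed earlier.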